Meta’s New Policy on AI Training Sparks Concerns in Australia

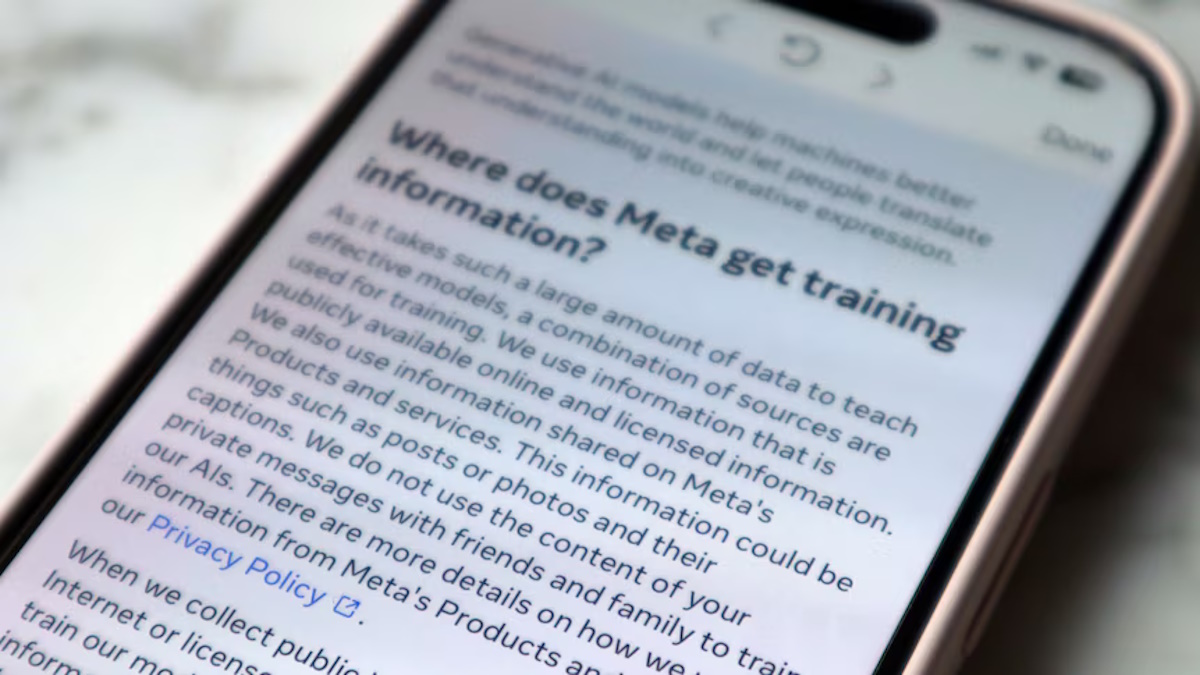

Starting June 26, Meta, the parent company of Facebook and Instagram, will begin using social activity data from as far back as 2007 to train its artificial intelligence (AI) tools. This includes posts, photos, captions, and messages to Meta’s AI chatbot. Users in Australia won’t have the option to opt out of this data usage, unlike their counterparts in the EU and certain US states.

This development has raised significant concerns among users and experts, particularly artists who fear for their creative rights and livelihoods.

The policy update is part of Meta’s effort to enhance its AI capabilities, but it has sparked backlash over its implications for user privacy and intellectual property. While Meta states that users give consent through its terms of service, many Australians admit they’ve never read these agreements thoroughly.

Children’s illustrator Sara Fandrey from Portugal expressed outrage upon learning about the update. She went viral on social media after posting a video demonstrating how to fill out an objection form available to EU residents, prompting others to follow suit under the hashtag #noaiart.

Artists like Fandrey and Sydney-based illustrator Thomas Fitzpatrick are concerned that their work will be used without permission or compensation. Some are considering leaving platforms like Instagram altogether to prevent their creations from being used to train AI models.

Dr. Joanne Gray from the University of Sydney explains that while Meta may justify its actions under US fair use laws, the practice poses a new economic threat to artists who rely on their distinctive style and work for their livelihood.

In response to the backlash, advocacy groups in the EU have filed complaints against Meta, questioning the ethics and legality of such data usage practices.

As Meta continues to expand its AI capabilities, artists are exploring alternative platforms like Cara, which prioritizes human creativity over AI-generated content. Cara’s user base has surged in response to Meta’s policy changes, reflecting growing concerns over data privacy and creative integrity.

While Meta asserts its commitment to responsible AI development, the ongoing debate underscores the need for clearer regulations and user protections in the evolving landscape of AI and data privacy.

Source: abc.net.au
